Introducing Codiris User Interview

Day 1 of Codiris Advent Calendar 🎄

If you walk into any product meeting in San Francisco, London, or Bangalore today, the energy is almost always the same. It is frantic. It is exciting. And it is entirely focused on the next thing.

“What if we added a collaborative mode?” “We need a dashboard update by Q3.” “The competitors just launched X, so we need Y.”

The main problem in product teams today isn’t the inability to build; it is the inability to prioritize. We are drowning in ideas but starved for validation. We treat the roadmap like a shopping list instead of a strategy.

The result? We become feature factories. We ship code that works perfectly for a problem nobody has.

The Rigor of “Why”

Before a single line of code is written, the most successful teams stop asking “How do we build this?” and start asking “Does the market actually care?”

This is where User Research moves from being a “nice-to-have” checkbox to the only leverage that matters. It is not about asking users what they want—users are terrible at predicting their future behavior. It is about understanding their current friction.

To do this effectively, you need to understand the toolset. You don’t use a hammer to turn a screw, and you don’t use a survey to understand deep motivation.

Screeners: This is your gatekeeper. Who are you actually talking to? If you are building for senior engineers, feedback from a junior marketing intern is not data; it’s noise.

Quantitative Questions: The “What” and “How Many.” These give you the breadth. They tell you, for example, that 70% of users drop off at the signup page.

Qualitative Questions: The “Why.” This is the gold mine. This is where you find out why they drop off (e.g., “I didn’t trust the logo,” or “I didn’t know it was free”).

The Magic Number is 5

There is a prevalent myth that user research requires hundreds of hours and a PhD. It doesn’t.

Jakob Nielsen of the Nielsen Norman Group, one of the most cited voices in UX research, popularized the “5 User Rule.”

His research with Tom Landauer found that testing with 5 users typically uncovers about 85% of usability problems. By the time you reach your fifth participant, you are close to a saturation point. You mostly stop hearing new problems and start hearing the same patterns repeated.

1 User gives you the first insights.

3 Users confirm the patterns.

5 Users solidify the data.

Beyond that, you encounter diminishing returns. This means you don’t need a massive budget; you need a rigorous process. You need to validate the problem before you attack the solution. And if you already have a product, usability tests are the single highest-ROI activity you can perform to unblock growth.
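The 85% figure comes from a simple model by Nielsen and Landauer: if each test user independently uncovers a share L of the usability problems, then n users uncover 1 - (1 - L)^n of them. Nielsen reports L of about 31% as the average across the projects they studied. Here is a minimal sketch of that curve, assuming the 31% average applies to your product (it may not) and using a function name of my own:

```python
def share_of_problems_found(n_users: int, per_user_rate: float = 0.31) -> float:
    """Expected share of usability problems found after n_users sessions.

    Implements 1 - (1 - L)^n, where L (per_user_rate) is the share of
    problems a single user uncovers. 0.31 is the average Nielsen reports;
    treat it as an assumption, not a constant of nature.
    """
    if n_users < 0:
        raise ValueError("n_users must be non-negative")
    if not 0.0 <= per_user_rate <= 1.0:
        raise ValueError("per_user_rate must be between 0 and 1")
    return 1 - (1 - per_user_rate) ** n_users


# Print the curve for a few sample sizes.
for n in (1, 3, 5, 10):
    print(f"{n:>2} users -> {share_of_problems_found(n):.1%}")
```

With the default rate, five users come out at roughly 84%, which is the figure usually rounded to 85%. The curve flattens quickly after that, which is the diminishing-returns argument in numeric form. If your per-user rate is lower than 31%, you will need more sessions to reach the same coverage.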

The Future of Validation

We know all this. Every Product Manager knows they should be doing research. So why don’t we?

Because it is hard.

Scheduling 5 interviews takes 50 emails. Conducting them takes 5 hours. Synthesizing the notes takes another 5. It is a grind. So we skip it. We rely on “intuition” (which is usually just bias in a trench coat) and we build the feature anyway.

We added Codiris Interviews to break this cycle.

We believe that user research shouldn’t be a bottleneck; it should be a continuous stream of truth. Codiris isn’t a survey tool. It is an AI-native environment that connects user insights with your roadmap. It conducts voice-to-voice interviews with your users at scale.

It handles the Screening, finding the right people.

It manages the Qualitative depth, asking follow-up “Why?” questions in a natural, human voice.

It delivers the Analysis, turning hours of conversation into clear, validated specs.

This week, we are launching our dedicated User Research capabilities. You can now validate an idea with 100 users in the time it usually takes to schedule one coffee chat.

Stop guessing. Stop building into the void. Start validating.

Let me show you how this works in practice.

All of this sounds reasonable in theory.

But the real question is: what does validation actually look like, day to day?

Here’s a concrete example.

I recently had an idea around LinkedIn. Not the feed, not content creation, but something much more basic:

how people actually find the right person and start a conversation.

Before building anything, I wanted to know whether this was a real problem or just my own frustration.

So instead of opening a coding tool, I opened Codiris Interviews.

Step 1: Start with a question, not a feature

I didn’t start with a solution.

I started with a question:

How do people use LinkedIn today when their only goal is to find and connect with a specific person?

That’s the problem I wanted to validate.

Step 2: Write the prompt in Codiris

Inside Codiris, I wrote a simple prompt:

Talk to people who regularly use LinkedIn to find talent, partners, investors, or customers.

Ask them how they currently search for people, what frustrates them in the process, and what they wish was simpler.

Follow up whenever they mention friction or workarounds.

No interview script.

No survey.

Just intent.

Codiris turns this into a real conversational flow.

You can also customize the interviewer itself.

In Codiris, you don’t just define questions; you add knowledge and context to the AI interviewer.

You can inject domain expertise, behavioral focus, and constraints so it understands the problem space before it starts talking.

This makes the AI interviewer more powerful than a human:

it stays objective, asks consistent follow-up “why?” questions, and never loses focus on the goal.

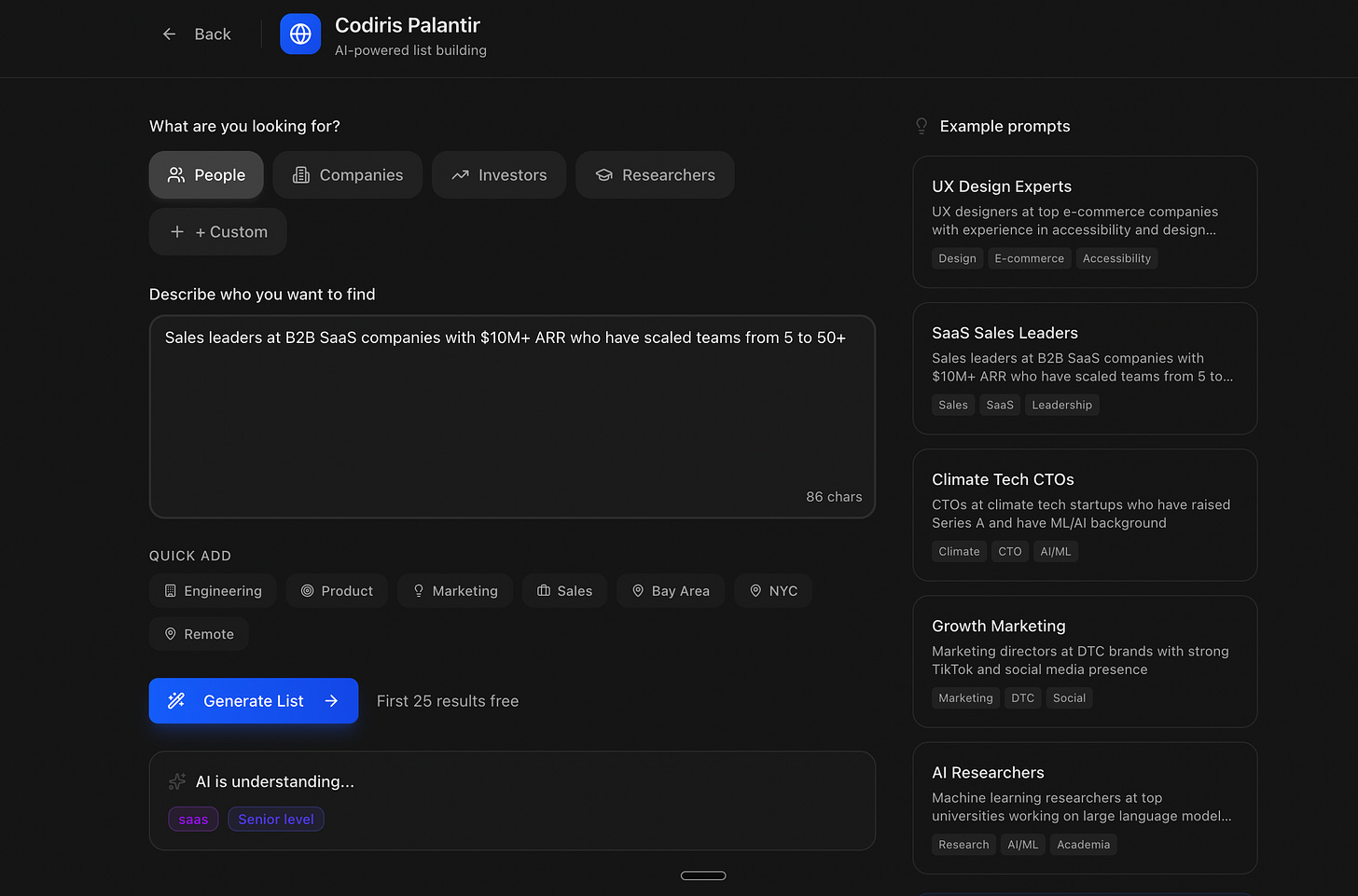

Step 3: Let Codiris find the right people

Instead of manually sourcing users or sending cold messages, I let Codiris handle the screening.

The goal wasn’t to talk to “everyone on LinkedIn.”

It was to talk to the right profiles.

In my case, two groups came up immediately as the most relevant:

Sales professionals

Recruiters

These are the people who use LinkedIn every day with a clear intent:

finding people, evaluating profiles, and starting conversations.

My objective was simple:

talk to as many of them as possible, where LinkedIn usage is both frequent and goal-oriented.

So instead of guessing where to find them, I used Codiris Palantir to search and identify the right participants.

I defined the criteria, and Codiris handled the rest:

Filtering users based on real usage patterns

Prioritizing people who actively search for profiles

Excluding low-signal users who only consume content
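To make those three criteria concrete, here is a minimal sketch of the screening logic in Python. It is an illustration only, not how Codiris implements screening: the `Candidate` fields, the role names, the threshold, and the sample pool are all assumptions I made up for the example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    role: str
    profile_searches_per_week: int    # hypothetical usage signal
    outreach_messages_per_week: int   # hypothetical usage signal


TARGET_ROLES = {"sales", "recruiter"}   # the two groups from this example
MIN_SEARCHES_PER_WEEK = 5               # assumed threshold, tune to taste


def passes_screener(c: Candidate) -> bool:
    """Keep people in a target role who search for profiles and reach out."""
    in_target_role = c.role.lower() in TARGET_ROLES
    searches_actively = c.profile_searches_per_week >= MIN_SEARCHES_PER_WEEK
    starts_conversations = c.outreach_messages_per_week > 0  # not content-only
    return in_target_role and searches_actively and starts_conversations


def shortlist(candidates: list[Candidate]) -> list[Candidate]:
    """Filter, then put the most active searchers first."""
    qualified = [c for c in candidates if passes_screener(c)]
    return sorted(qualified, key=lambda c: c.profile_searches_per_week, reverse=True)


# Made-up pool, for illustration only.
pool = [
    Candidate("A", "Recruiter", 40, 25),
    Candidate("B", "Sales", 12, 30),
    Candidate("C", "Designer", 0, 0),   # wrong role, content consumer
    Candidate("D", "Sales", 1, 0),      # right role, but low signal
]
print([c.name for c in shortlist(pool)])
```

Only A and B survive: both are in a target role, search often, and actually send messages. Writing the rules down like this, even on paper, forces you to decide what “the right people” means before the first interview.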

What normally takes days or weeks of manual outreach happened automatically.

At this point, I wasn’t just “interviewing users.”

I was talking to the exact people the product is built for.

That’s the difference between random feedback and real signal.

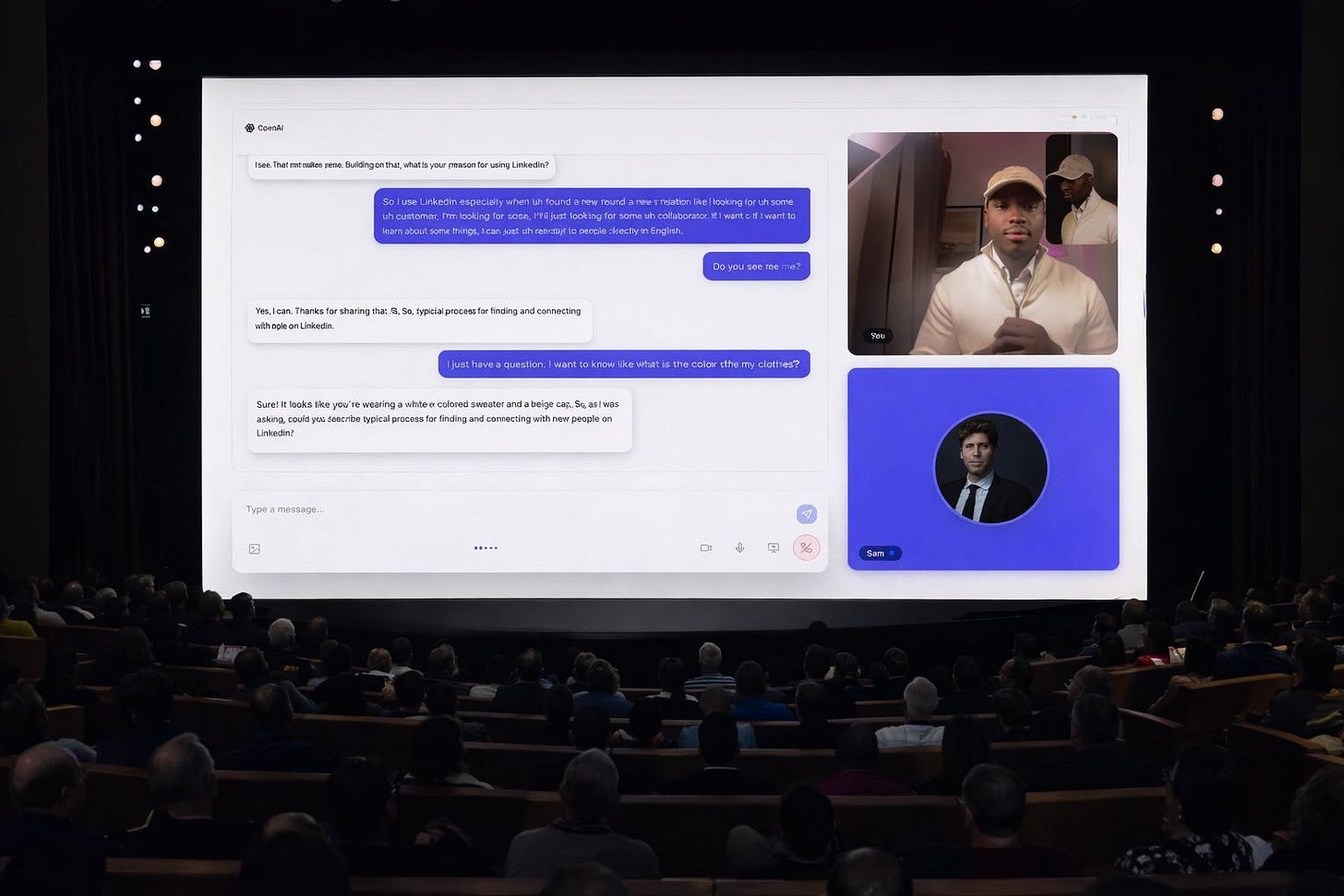

Step 4: Multimodal interviews

Codiris then conducted real conversations with those users.

Not forms.

Not multiple-choice questions.

Actual, voice-to-voice interviews.

It asked questions like:

“Why are you opening LinkedIn in the first place?”

“What’s the hardest part of finding the right person?”

“What do you do when search doesn’t give you what you need?”

When something interesting surfaced, Codiris followed up naturally:

“Why is that frustrating?”

“What did you try instead?”

This is where real insight emerges.

But these interviews aren’t limited to voice.

Codiris runs multimodal interviews, combining:

Voice: natural conversation

Text: links, written answers, clarifications

Screens or artifacts: users reacting to real interfaces, flows, or examples

For example, a user can:

Explain a workflow out loud

Paste an actual LinkedIn search they use

React to a screen or feature in real time

Codiris adapts its questions across modalities, following up based on what it hears, reads, and sees.

Step 5: From conversations to patterns

After the interviews, I didn’t receive raw transcripts, scattered notes, or hours of recordings to analyze manually.

Codiris automatically synthesized the conversations into structured insight.

What this means in practice is that Codiris:

Detects repeating pain points across different interviews

Groups similar statements into clear behavioral patterns

Attaches direct evidence (real user quotes) to each insight

Instead of anecdotes, I got validated signals.

For example, Codiris surfaced patterns like:

Users struggle to connect because response rates are low

Many connections are ignored because messages are perceived as sales-driven

People don’t want to browse feeds; they want to find one relevant person and start a conversation

Users are actively looking for better ways to improve connection quality and response rates

Each insight is backed by:

A clear explanation

An evidence count

Direct quotes from users (e.g. “People don’t answer; they think you’re there to sell”)
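If you want a mental model of what such an insight looks like as data, here is a minimal sketch. It is not Codiris’s internal format: the `Insight` class, the theme labels, and the participant IDs are hypothetical, and it assumes the statements have already been tagged with a theme. Only the first quote comes from my interviews; the others are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Insight:
    theme: str
    quotes: list[str] = field(default_factory=list)
    participants: set[str] = field(default_factory=set)

    @property
    def evidence_count(self) -> int:
        # Count distinct participants, not quotes, so one talkative
        # person cannot inflate a pattern.
        return len(self.participants)


def build_insights(tagged_statements) -> list[Insight]:
    """Group (participant_id, theme, quote) tuples into ranked insights."""
    by_theme: dict[str, Insight] = {}
    for participant_id, theme, quote in tagged_statements:
        insight = by_theme.setdefault(theme, Insight(theme))
        insight.quotes.append(quote)
        insight.participants.add(participant_id)
    return sorted(by_theme.values(), key=lambda i: i.evidence_count, reverse=True)


statements = [
    ("p1", "perceived sales intent", "People don't answer; they think you're there to sell"),
    ("p2", "perceived sales intent", "<placeholder quote>"),
    ("p2", "low response rate", "<placeholder quote>"),
    ("p1", "perceived sales intent", "<placeholder quote>"),
]

for insight in build_insights(statements):
    print(insight.theme, insight.evidence_count, len(insight.quotes))
```

The design choice worth copying, whatever tool you use, is keeping the quotes attached to the pattern. An insight you cannot trace back to what someone actually said is just an opinion with a label.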

This is the critical shift.

At this point:

I wasn’t relying on my own interpretation

I wasn’t cherry-picking feedback

I wasn’t guessing what mattered

The signal emerged naturally from the data.

This is where user research stops being subjective and becomes decision-ready.

At this point, it wasn’t intuition anymore.

It was evidence.

Step 6: From insights to product decisions

This is the step where most teams fail.

They collect insights…

and then go back to building whatever they were planning to build anyway.

Codiris is designed to prevent that.

Once the patterns are clear, the next question becomes:

What should we not build?

What deserves focus right now?

Because the insights are already structured, Codiris makes this step explicit.

For each validated pattern, I can:

See who experiences the problem

Understand why it happens

Measure how often it appears

And evaluate how much it blocks the user’s goal

This turns qualitative insight into prioritization input.
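One simple way to turn those four questions into a ranking is to multiply how often a pattern appears by how badly it blocks the user. The sketch below assumes that scoring rule, a 1 to 3 severity scale, and invented numbers; none of it is Codiris’s actual scoring, and the figures are not results from my interviews.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pattern:
    name: str
    segment: str                 # who experiences the problem
    cause: str                   # why it happens
    participants_affected: int   # how often it appears
    participants_total: int
    severity: int                # assumed scale: 1 annoyance, 2 slows, 3 blocks goal

    def __post_init__(self) -> None:
        if self.participants_total <= 0:
            raise ValueError("participants_total must be positive")
        if not 0 <= self.participants_affected <= self.participants_total:
            raise ValueError("participants_affected out of range")

    @property
    def frequency(self) -> float:
        return self.participants_affected / self.participants_total

    @property
    def priority(self) -> float:
        # Assumed rule: frequency times severity. Swap in your own weighting.
        return self.frequency * self.severity


def rank(patterns: list[Pattern]) -> list[Pattern]:
    """Highest priority first."""
    return sorted(patterns, key=lambda p: p.priority, reverse=True)


# Invented numbers, for illustration only.
patterns = [
    Pattern("Feed is noisy", "recruiters", "too much content", 2, 10, 1),
    Pattern("Messages read as sales pitches", "sales", "perceived intent", 8, 10, 3),
]
print([p.name for p in rank(patterns)])
```

The exact formula matters less than making it explicit. Once the score is written down, “what should we not build?” becomes a question about the bottom of the list rather than a debate.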

In my case, the signal was clear:

People don’t struggle to find content

They struggle to get answers from the right people

The perception of sales intent kills response rates

Search works, but connection quality doesn’t

That immediately ruled out entire categories of features.

No feed improvements.

No content tools.

No engagement hacks.

Instead, it pointed to a much sharper problem space:

how to help people find the right person and start a conversation that actually gets a response.

At this point, building is no longer risky.

The problem is validated.

The audience is clear.

The success criteria are explicit.

This is where product development becomes confident instead of speculative.

You’re not shipping “features.”

You’re executing on evidence-backed decisions.

And that’s the real purpose of user research.

What’s next

This concludes Day 1 of our Codiris launch calendar: User Research & Interviews.

In Day 2, we’ll go one step further: from validated insight to clear product specs and decisions, and how teams avoid falling back into the feature factory once research is done.

If you want to see Day 1 in action, including how Codiris runs user research end-to-end, you can watch the full walkthrough here:

👉

More coming tomorrow.

—

Joël